Zensark AI Division

July 16, 2024

In the ever-evolving world of artificial intelligence, a new technique is making waves: Retrieval-Augmented Generation (RAG). This innovative approach is set to revolutionize how AI language models generate responses, promising more accurate, reliable, and informative outputs. Let’s dive into what RAG is, how it works, and why it matters.

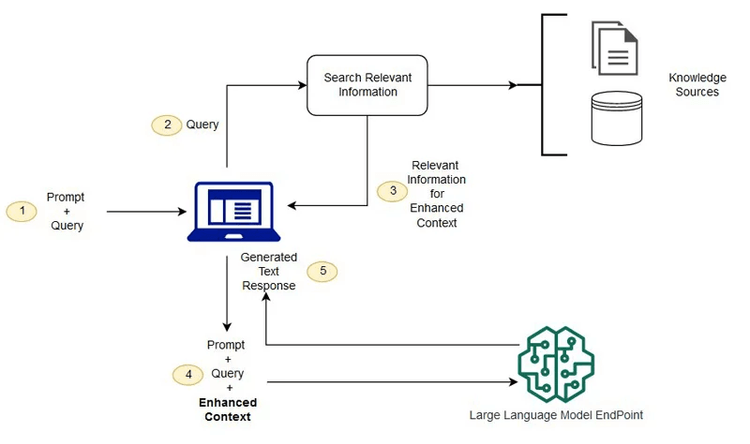

RAG is a method that enhances the capabilities of large language models by incorporating external knowledge sources. Think of it as giving your AI a powerful research assistant. Instead of relying solely on what the model learned during training, RAG lets the model retrieve relevant information from external databases or documents and integrate it when generating responses.

The RAG process can be broken down into three key phases: retrieval, augmentation, and generation.
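To make the flow concrete, here is a minimal Python sketch of a retrieve, augment, generate pipeline. It is an illustration under stated assumptions, not a production design: the `DOCUMENTS` list, the word-overlap scoring, and the `generate` stub are all hypothetical stand-ins. A real system would typically use embeddings with a vector store for retrieval and call an actual language model for generation.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base (assumption for illustration only).
DOCUMENTS = [
    "RAG retrieves relevant documents before the model generates an answer.",
    "Fine-tuning adjusts model weights on a domain-specific dataset.",
    "Vector databases store embeddings for fast similarity search.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Phase 1: rank documents by similarity to the query and keep the top k."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


def augment(query, passages):
    """Phase 2: combine the retrieved passages and the question into one prompt."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


def generate(prompt):
    """Phase 3: placeholder for a language model call (stub, not a real model)."""
    return f"[model response to a {len(prompt)}-character prompt]"


def answer(query):
    """Run the three phases in order."""
    passages = retrieve(query, DOCUMENTS)
    prompt = augment(query, passages)
    return generate(prompt)
```

Calling `answer("How does fine-tuning change model weights?")` retrieves the most relevant passages, places them in the prompt, and passes that prompt to the stubbed model. Swapping `generate` for a real model call and `retrieve` for an embedding-based search would keep the same three-phase shape.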

RAG offers several significant advantages over traditional AI language models:

While both RAG and fine-tuning have their places in AI development, they serve different purposes. RAG excels when tasks require access to external knowledge and benefit from contextual understanding. It’s ideal for applications like question-answering systems, dialogue models, and content generation.

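Fine-tuning, on the other hand, is better suited for specialized tasks within a specific domain, such as sentiment analysis or named entity recognition. Because no retrieval step runs at query time, it can offer faster response times, but the model's knowledge is limited to what it saw during training, so it may lack the breadth and freshness that RAG provides.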

RAG is already finding its way into various applications:

As we continue to push the boundaries of what AI can do, techniques like RAG are paving the way for more human-like interactions with AI systems. By grounding responses in retrieved sources, RAG helps mitigate common AI challenges such as bias and misinformation. It is not just improving the performance of language models; it’s making them more trustworthy and useful in real-world applications.

The potential of RAG is vast, from transforming how we interact with search engines to revolutionizing content creation and knowledge management. As this technology continues to evolve, we can look forward to AI systems that are not just more intelligent, but also more reliable, contextually aware, and truly helpful in our day-to-day lives.

In conclusion, Retrieval-Augmented Generation (RAG) represents a significant leap forward in AI language model technology. By bridging the gap between vast external knowledge sources and generative AI, RAG is setting the stage for more accurate, reliable, and contextually aware AI interactions.

If you are looking for impactful insights or wish to learn more about RAG, please reach out to us at info@zensark.com.

Contact us today, and we will schedule a free consultation to find the right engagement model for your business needs.